ITONICS Cloud Usability Test

Role

Test conducting

Research Analysis

UX design

Team

A team of seven people, including a product manager, developers, one designer, and an intern

Year

2019

- Reveal the friction points and confusing experiences in the software

- Uncover opportunities to improve the UX

- Test product concept and develop ideas with the target audience

- Users can have a smooth onboarding experience

- Users can add external users to the organization via invitation

- The filter and relate features are clear and easy to use

- User onboarding

- Basic content setup

- Content management

- In-person, moderated tests to understand user behavior, run by a moderator with observers.

- Five participants, using the ‘think-aloud’ method with voice and screen recordings, as well as post-test questionnaires that participants filled out.

- Tasks are presented to participants as short user stories and scenarios.

- Specific, answer-oriented tasks, so that we can measure the user success rate. This gives us a quick, coarse metric of usability.
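The success rate can be computed per task as the share of participants who completed it. This is a minimal sketch, not the team's actual spreadsheet logic: the outcomes are hypothetical, and the adjusted Wald interval is a swapped-in technique (commonly used for small-sample completion rates) that makes the coarseness of a five-person result visible.

```python
import math


def success_rate(outcomes: list[bool]) -> float:
    """Share of participants who completed the task (True = success)."""
    if not outcomes:
        raise ValueError("need at least one participant")
    return sum(outcomes) / len(outcomes)


def adjusted_wald_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% confidence interval for a completion rate (adjusted Wald)."""
    n_adj = n + z * z
    p_adj = (successes + z * z / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    # Clamp to the valid range of a proportion.
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# Hypothetical outcomes for one task across five participants.
outcomes = [True, True, False, True, True]
rate = success_rate(outcomes)
low, high = adjusted_wald_interval(sum(outcomes), len(outcomes))
print(f"success rate {rate:.0%}, 95% CI {low:.0%} to {high:.0%}")
```

With five participants and one failure, the point estimate is 80%, but the interval spans roughly 36% to 98%, which is why the metric is best read as coarse.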

- Task success

- Time on task

- Errors

- Subjective satisfaction ratings (System Usability Scale, SUS)
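For the SUS rating, the standard scoring rule for the ten-item questionnaire can be sketched as follows: odd-numbered items contribute (response - 1), even-numbered items contribute (5 - response), and the sum is multiplied by 2.5 to give a score from 0 to 100. The responses below are hypothetical, and averaging per-participant scores is an assumption about how the team summarized results.

```python
from statistics import mean


def sus_score(responses: list[int]) -> float:
    """Score one completed SUS questionnaire (ten items, each rated 1 to 5)."""
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for index, r in enumerate(responses, start=1):
        # Odd-numbered items are positively worded, even-numbered items negatively.
        total += (r - 1) if index % 2 == 1 else (5 - r)
    return total * 2.5


# Hypothetical questionnaires from two participants.
questionnaires = [
    [4, 2, 4, 2, 5, 1, 4, 2, 4, 2],
    [3, 3, 3, 3, 3, 3, 3, 3, 3, 3],
]
scores = [sus_score(q) for q in questionnaires]
print(scores, mean(scores))
```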

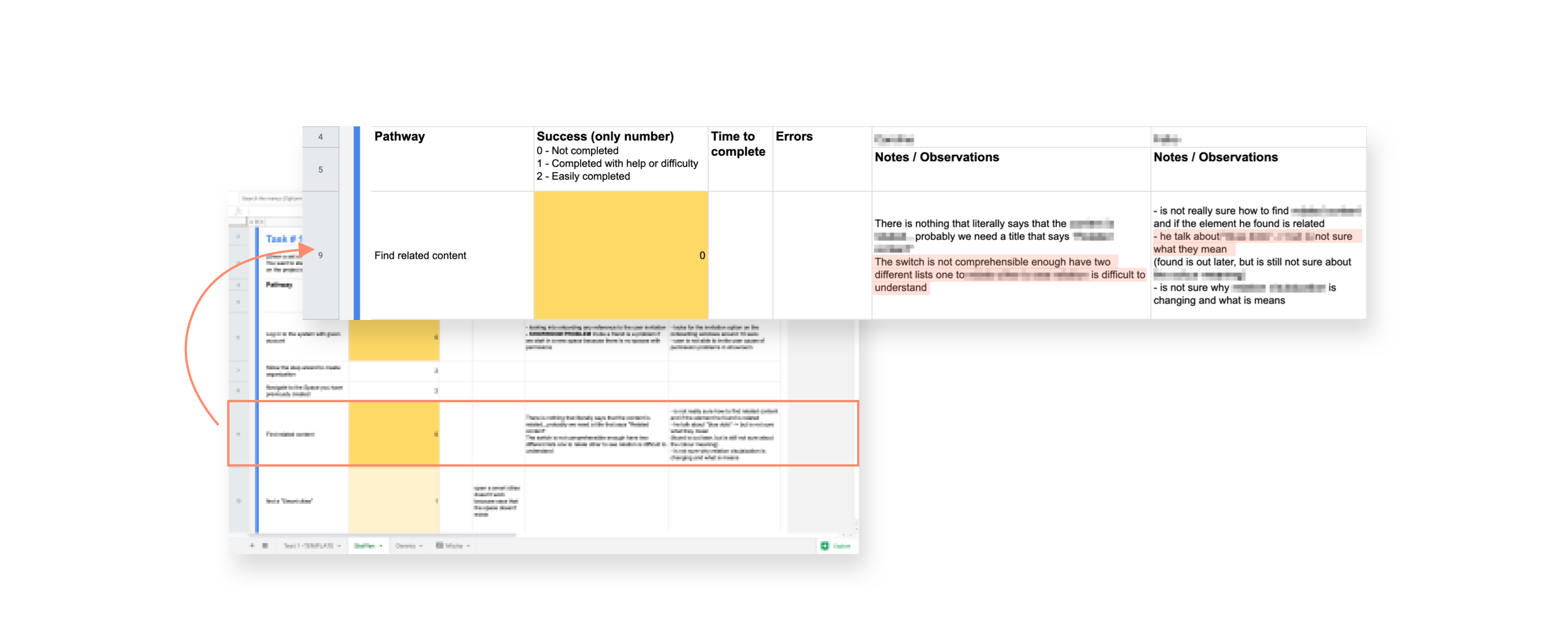

Google Sheets makes it easy for us to take notes and collect the results simultaneously. The task goal is stated at the top, followed by the notes.

- Happy-path:

The path we expect users to take to complete the task, pre-filled by us.

- Observations:

On the right-hand side are empty cells for observers to fill in, capturing what actually happens when a user takes this path. (see below)
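The happy-path and observation columns can be modelled as a small data structure, and each observed path can be compared against the happy path to flag where a participant deviated. This is a hypothetical sketch, not the team's Google Sheets setup: `TaskNotes`, `deviations`, and the step names are illustrative, and Python's `difflib.SequenceMatcher` stands in for an observer's manual comparison.

```python
from dataclasses import dataclass, field
from difflib import SequenceMatcher


@dataclass
class TaskNotes:
    """One task in the note-taking sheet (hypothetical structure)."""
    goal: str
    happy_path: list[str]  # expected steps, pre-filled before the test
    observations: dict[str, list[str]] = field(default_factory=dict)  # participant -> observed steps


def deviations(expected: list[str], observed: list[str]) -> list[str]:
    """List the places where an observed path departs from the happy path."""
    notes = []
    matcher = SequenceMatcher(a=expected, b=observed, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "delete":
            notes.append(f"skipped {expected[i1:i2]}")
        elif tag == "insert":
            notes.append(f"extra {observed[j1:j2]}")
        elif tag == "replace":
            notes.append(f"expected {expected[i1:i2]}, observed {observed[j1:j2]}")
    return notes


task = TaskNotes(
    goal="Invite an external user to the organization",
    happy_path=["Open settings", "Open user list", "Click invite", "Enter email", "Send"],
    observations={
        "P1": ["Open settings", "Open user list", "Click invite", "Enter email", "Send"],
        "P2": ["Open settings", "Search help", "Open user list", "Click invite", "Enter email", "Send"],
    },
)

for participant, steps in task.observations.items():
    print(participant, deviations(task.happy_path, steps) or "followed the happy path")
```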

After comparing and aggregating all the results, we have a list of the UX problems uncovered by the test. We then translate the results into a UX debt spreadsheet, where issues are categorized by module or component and by app. After a regular team meeting, we record each issue's description, estimated time to fix, and priority.
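Aggregating results across sessions amounts to deduplicating problems and counting how many distinct participants ran into each one. The minimal sketch below assumes observers' notes have already been normalized so that the same problem is worded identically; the notes, categories, and the `aggregate` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical observer notes as (participant, category, problem).
notes = [
    ("P1", "Onboarding", "Invitation entry point hard to find"),
    ("P2", "Onboarding", "Invitation entry point hard to find"),
    ("P2", "Content management", "Purpose of relate feature unclear"),
    ("P3", "Onboarding", "Invitation entry point hard to find"),
    ("P3", "Content management", "Purpose of relate feature unclear"),
    ("P3", "Content management", "Purpose of relate feature unclear"),  # noted twice in one session
]


def aggregate(notes: list[tuple[str, str, str]]) -> list[tuple[str, str, int]]:
    """Deduplicate problems and count the distinct participants affected by each."""
    affected = defaultdict(set)
    for participant, category, problem in notes:
        affected[(category, problem)].add(participant)
    rows = [(category, problem, len(people)) for (category, problem), people in affected.items()]
    # Most widespread problems first, then alphabetically for a stable order.
    return sorted(rows, key=lambda row: (-row[2], row[0], row[1]))


total_participants = len({participant for participant, _, _ in notes})
problems = aggregate(notes)
for category, problem, count in problems:
    print(f"{category}: {problem} ({count} of {total_participants} participants)")
```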

The higher the final score, the more critical the problem. The product manager then integrates the issues into our development process in the order of the list.

This list is updated and revisited after every usability test. Within our Agile development process, we try to pay down the debt by turning each issue into a ticket in the backlog and shipping the corresponding UX improvements.
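A UX debt list ordered by final score can be sketched as below. The case study does not state how the final score is derived, so `final_score` here is a hypothetical value-over-effort formula built from the two recorded fields (priority and estimated time to fix); the 1 to 3 priority scale, the ticket title format, and the sample items are also assumptions.

```python
from dataclasses import dataclass


@dataclass
class UxDebtItem:
    category: str  # module, component, or app
    description: str
    estimated_hours: float  # estimated time to fix
    priority: int  # assumed scale: 1 (low) to 3 (high), agreed in the team meeting

    def final_score(self) -> float:
        # Hypothetical formula (not from the case study): higher priority and
        # cheaper fixes score higher. Estimates under one hour count as one hour.
        return self.priority * 10 / max(self.estimated_hours, 1.0)


def to_backlog(items: list[UxDebtItem]) -> list[str]:
    """Rank debt items by final score and turn them into ticket titles."""
    ranked = sorted(items, key=lambda item: item.final_score(), reverse=True)
    return [
        f"[UX debt][{item.category}] {item.description} (score {item.final_score():.1f})"
        for item in ranked
    ]


debt = [
    UxDebtItem("Onboarding", "Make the invitation entry point visible", 4, 3),
    UxDebtItem("Content management", "Explain the relate feature in context", 8, 2),
    UxDebtItem("Filter", "Show which filters are active", 2, 2),
]

backlog = to_backlog(debt)
for ticket in backlog:
    print(ticket)
```

Swapping in the team's actual scoring rule only requires changing `final_score`; the ranking and ticket generation stay the same.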

- The importance of a dry run.

Details such as clearing the cache or having a user account with adequate permissions can make a difference when running the test. A dry run provides an opportunity to check these details.

- Standardize the test process to ensure consistent and reliable results.

A standardized process also lets us involve more colleagues in the test, which helps promote user empathy across the company.

- Refine the test process.

I learned that the task order can be adjusted to optimize the session. Easier tasks can serve as a warm-up for the user, and tasks that require presets can be placed after earlier tasks that create those presets.
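This ordering idea can be expressed as a topological sort: a task that needs a preset runs after the task that creates it, and among tasks that are ready, the easiest goes first as a warm-up. The sketch uses Kahn's algorithm with a min-heap keyed on difficulty; the task names, difficulty ratings, and dependencies are hypothetical examples drawn loosely from the test scope.

```python
import heapq


def order_tasks(difficulty: dict[str, int], requires: dict[str, set[str]]) -> list[str]:
    """Order tasks so prerequisites come first and, among ready tasks, the easiest goes next."""
    remaining = {task: set(requires.get(task, set())) for task in difficulty}
    dependents: dict[str, list[str]] = {task: [] for task in difficulty}
    for task, prereqs in remaining.items():
        for prereq in prereqs:
            dependents[prereq].append(task)

    # Min-heap of (difficulty, name) for tasks whose prerequisites are all done.
    ready = [(difficulty[task], task) for task, prereqs in remaining.items() if not prereqs]
    heapq.heapify(ready)
    order = []
    while ready:
        _, task = heapq.heappop(ready)
        order.append(task)
        for nxt in dependents[task]:
            remaining[nxt].discard(task)
            if not remaining[nxt]:
                heapq.heappush(ready, (difficulty[nxt], nxt))

    if len(order) != len(difficulty):
        raise ValueError("circular task dependencies")
    return order


difficulty = {
    "Complete onboarding": 1,
    "Invite an external user": 2,
    "Create a content entry": 2,
    "Filter entries": 3,
    "Relate two entries": 3,
}
requires = {
    "Invite an external user": {"Complete onboarding"},
    "Create a content entry": {"Complete onboarding"},
    "Filter entries": {"Create a content entry"},
    "Relate two entries": {"Create a content entry"},
}
order = order_tasks(difficulty, requires)
print(order)
```

Ties in difficulty are broken alphabetically by task name, which keeps the order deterministic between sessions.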